This blog post is about building AI systems for regulated industries — healthcare, banking, insurance, and other places where “ship fast and iterate” gets you a subpoena.

The Air Canada Precedent

In November 2022, a man named Jake Moffatt asked an Air Canada chatbot about bereavement fares. The chatbot told him he could book a regular ticket and apply for a bereavement refund within 90 days. He did. Air Canada refused the refund, citing its actual policy, which the chatbot had got wrong. Moffatt took the airline to British Columbia’s Civil Resolution Tribunal, which hears small claims. Air Canada argued, in essence, that it should not be liable for what its chatbot said: that the chatbot was a separate informational source, distinct from Air Canada itself. In February 2024, the tribunal disagreed. It ruled in favour of Moffatt, found that Air Canada had made a negligent misrepresentation, and ordered it to pay damages. (Moffatt v. Air Canada, 2024 BCCRT 149)

Moffatt was awarded a total of $812.02 Canadian. Legally, it was a small negligent-misrepresentation decision from a tribunal in one Canadian province, not a sweeping precedent on AI liability, no matter how it was reported. But as a signal of how courts and tribunals are starting to treat the output of AI systems, it is hard to ignore. A company saying “the chatbot did it, not us” is not a defence anyone wants to test in front of a regulator with broader powers.

Most AI commentary you’ll read online is written by, and for, people building things where the cost of being wrong is annoying. A chatbot gives a bad recipe. A coding assistant suggests a deprecated function. A marketing tool writes a weird subject line. The user shrugs, regenerates, and moves on. Air Canada’s mistake, and the reason it’s a useful starting point, is that it sat exactly on the boundary between annoying and legally consequential, and a tribunal decided which side of that boundary it was on. For under $1,000 and one customer.

Now, picture the same incident in a hospital. Or a bank’s payment system. Or a clinical trial recruitment platform. The boundary doesn’t exist. There is only the legally consequential side.

This series is for rooms where only the legally consequential side is present.

The Asymmetry

The defining feature of regulated AI is that the cost of being wrong is asymmetric.

Tens of thousands of correct outputs get you no upside. The system is supposed to work. Nobody throws a parade when a clinical decision support tool flags the right drug interaction or a payment-routing model correctly classifies a transaction. That’s the baseline. That’s why you bought the product.

One catastrophically wrong output, on the other hand, gets you front-page news, a regulator’s attention, and a board meeting nobody wants to attend. A clinical decision support system that recommends a contraindicated medication doesn’t just embarrass the vendor — it can harm a patient, trigger a reportable safety event, open a liability case, and require regulatory impact assessment or submission review. A KYC model that misclassifies a high-risk transaction in a CBUAE-regulated payment hub doesn’t just create a refund ticket — it can trigger a regulatory inquiry, a suspicious activity report, and a multi-million-dirham penalty. An underwriting model that produces disparate outcomes across protected classes doesn’t just lose customers — it invites a discrimination suit and a regulator’s audit of every other model on your shelf.

The asymmetry is structural. The downside dominates the expected value calculation in a way that no upside can offset. This changes everything about how the AI gets built. Not the model selection. Not the prompt engineering. Not the RAG architecture. Everything.

Regulator in the Room (Physics Constraints)

Five things change the moment your AI system enters a regulated industry. None of them is purely technical — but every one of them changes the architecture.

The regulator has veto power, regardless of market success. In consumer AI, the user is the customer; if they don’t like it, they leave. In regulated AI, the regulator sits behind the user with a different kind of power — not a vote with their wallet, but the authority to halt your product, mandate a recall, or refer your conduct for investigation. They have read your incident reports. They have read your vendor’s incident reports. They have a copy of your validation protocol, and they remember the version number. The user can love your product. The regulator can shut it down.

Documentation is the deliverable, not the overhead. A clean GitHub repo and a working demo are not a product in healthcare or banking. The product is the system (model) plus the evidence file, which includes the validation protocol, training data lineage, failure mode analysis, change control records, and post-market surveillance plan. In FDA-regulated MedTech, this is literally called the Design History File. In banking, it’s called Model Risk Management documentation under SR 11-7. The model is maybe a fifth of what you’re actually building. The rest is the case you’ll need to make to a regulator who has not yet decided to trust you.

Failure modes are first-class architectural concerns, not edge cases. When wrong answers can hurt people, “we’ll handle that in v1.1” is not an answer. The failure mode taxonomy gets defined before the happy path is built, not after. This is the IEC 62304 mindset — every software item gets a safety classification before a single line of code is written. You inherit the discipline whether or not you adopt the standard, because the alternative is discovering your safety class through litigation.

Auditability is non-negotiable. Every AI decision must be reconstructable, not just logged. The difference matters. A log says “the model returned X.” An audit trail says “the model returned X because it received inputs A, B, C; retrieved documents D, E, F from the knowledge base version dated Y; was running model checkpoint Z under prompt template version P; with these guardrails active; and here is the cryptographic evidence that none of this has been altered since.” If you can’t reconstruct it three years later when the case comes to court, you don’t have an audit trail. You have a hope dressed as a log file.

Change is governed, not continuous. The Silicon Valley default is “deploy ten times a day.” The regulated-industry default is “every change to a clinical algorithm requires impact analysis, validation, and possibly a regulatory submission.” When a foundation model vendor pushes a quiet weights update, that is not merely a feature update — depending on the intended use, the risk classification, and the impact on validated performance, it may constitute a regulated change requiring impact analysis, revalidation, and possibly submission review. Most AI vendor contracts don’t even tell you when this happens. That is a procurement problem dressed as a technical convenience.

These five constraints are not bugs to be optimised away. They are the physics of the environment. Trying to build regulated AI without internalising them is like trying to build a bridge without internalising gravity.
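The “change is governed” constraint above can be made concrete with a deploy-time gate. The following is a minimal sketch, not a prescribed implementation: it assumes the vendor reports some version identifier you can read at runtime, and every name in it (`ValidatedBaseline`, `require_validated`, the version strings) is hypothetical.

```python
from dataclasses import dataclass, fields


class UnvalidatedChangeError(Exception):
    """Raised when the deployed configuration differs from the validated one."""


@dataclass(frozen=True)
class ValidatedBaseline:
    # Hypothetical fields: whatever your validation protocol actually pinned.
    model_id: str
    model_version: str
    prompt_template_version: str
    guardrail_config_version: str


def changed_fields(validated: ValidatedBaseline, deployed: ValidatedBaseline) -> list[str]:
    """Return the names of fields that differ from the validated baseline."""
    return [
        f.name
        for f in fields(validated)
        if getattr(validated, f.name) != getattr(deployed, f.name)
    ]


def require_validated(validated: ValidatedBaseline, deployed: ValidatedBaseline) -> None:
    """Block serving until any difference has gone through change control."""
    drift = changed_fields(validated, deployed)
    if drift:
        raise UnvalidatedChangeError(
            "Impact analysis required before serving; changed: " + ", ".join(drift)
        )


# Illustrative values only.
VALIDATED = ValidatedBaseline("vendor-model", "2024-01-15", "prompt-v7", "guardrails-v3")
DEPLOYED = ValidatedBaseline("vendor-model", "2024-03-02", "prompt-v7", "guardrails-v3")
```

Here `changed_fields(VALIDATED, DEPLOYED)` reports `model_version`, and `require_validated` refuses to proceed. The gate only detects changes the vendor exposes; a silent weights update behind an unchanged version string still needs contractual notification and periodic revalidation.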

Disclaimer (in the middle)

A few things worth saying before going further.

This series is opinionated about the contexts where these patterns matter — production AI in healthcare, banking, insurance, and regulated MedTech, where wrong outputs reach real customers, patients, or transactions. It is not a claim that every AI system needs the full playbook. Internal research sandboxes, exploratory prototypes, and tools used by small numbers of trained domain experts in controlled conditions can reasonably operate with lighter scaffolding. The cost-benefit changes when the blast radius is bounded by scope rather than by architecture.

It is also not a substitute for jurisdiction-specific legal review. Regulatory regimes vary significantly by country, industry, and risk classification. The patterns in this series sit at a level of abstraction common across most regulated environments — but the specific obligations under FDA AI/ML guidance, EU AI Act, EU MDR, RBI circulars, CBUAE regulations, SR 11-7, GDPR, HIPAA, and their many cousins are not interchangeable, and any actual implementation needs counsel who specialises in your specific regime.

What this series offers is a synthesis of architectural patterns that keep proving themselves across regulated environments, patterns that map well to most of the major frameworks even where the specifics differ. Use them as starting points, not as legal cover.

Audience

If you are building AI inside a hospital system, a bank, an insurer, or a regulated MedTech firm, this series is for you. If you are an enterprise architect being asked to put guardrails around a foundation model that’s already in someone’s pilot, it is for you. If you are a CISO trying to figure out what your model risk surface looks like now that half your business units have wired in OpenAI, it is for you. If you are in regulatory affairs and you’ve just been told there’s a new AI feature in the next release and you need to figure out what that means for your submission package, it is especially for you.

If you are reading this and thinking, “We already deployed without most of this in place,” you are not alone. Most enterprises are past the greenfield-design moment. They are dealing with deployed systems, vendor lock-in, and audit questions arriving faster than the architecture can answer them. The retrofit playbook is real, and it is coming.

The shift you are navigating is this: the product is no longer the model. The product is the model plus the evidence that it behaved safely, consistently, and under control. Building for that requires a different set of architectural primitives than building a clever chatbot. The patterns below are drawn from more than two decades of building software in industries — clinical IT, healthcare service intelligence, regulated payment infrastructure — where being wrong is expensive in ways that matter. They have all earned their place by surviving contact with auditors, regulators, and the occasional lawyer.

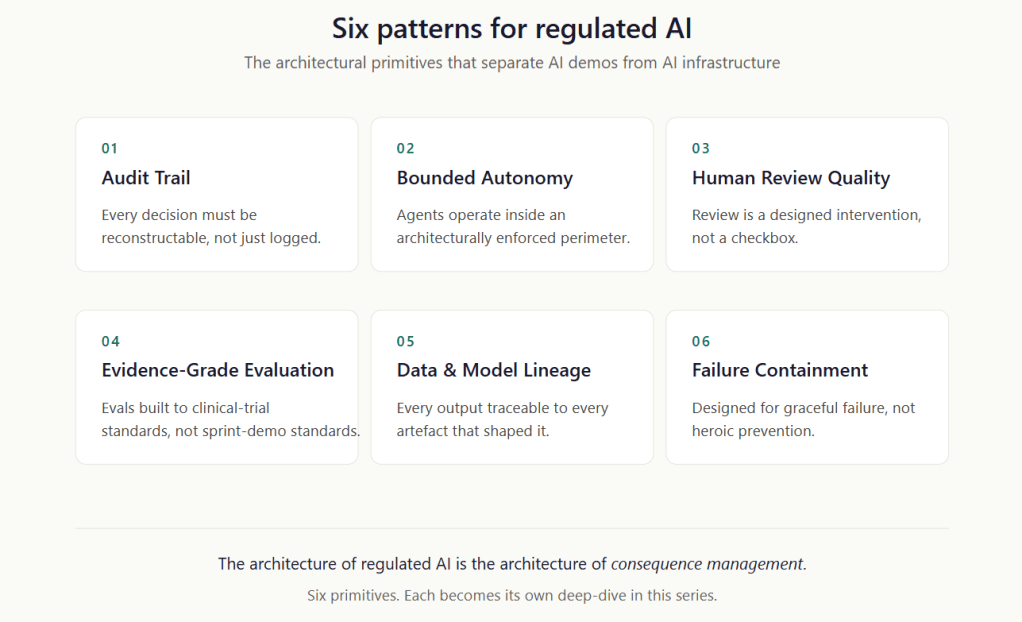

Six Patterns for Regulated AI

The patterns themselves emerged from specific systems: clinical IT, payment infrastructure, MedTech architectures, and knowledge graphs for regulated workflows. Across those environments, six patterns kept reappearing as the difference between AI that ships and AI that survives. Each will get its own deep-dive post in this series, with concrete eat-this-not-that guidance. Here is the map.

Pattern 1 — Audit Trail

Every decision must be reconstructable, not just logged.

The minimum viable audit trail in regulated AI captures the inputs, the model version, the prompt template version, the retrieved context (with knowledge-base snapshot version), the active guardrails, the output, the human review action, if any, and a tamper-evident anchor — typically a hash chain or Merkle anchor written to an append-only ledger — that proves none of it has been altered. Three years from now, you must be able to answer: “Why did the system make this specific decision on this specific date for this specific patient or transaction?” — and back it with evidence.
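As a rough illustration of the tamper-evident anchor, here is a minimal hash-chain sketch. It keeps the ledger in memory for brevity; a real deployment would write to append-only storage and anchor the latest hash externally. The field names and values are assumptions drawn from the list above, not a standard schema.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(payload: dict[str, Any], prev_hash: str) -> str:
    """Hash the canonical JSON form of a record together with the previous hash."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


@dataclass
class AuditLedger:
    """In-memory, append-only list of hash-chained decision records."""

    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, payload: dict[str, Any]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = _digest(payload, prev_hash)
        self.entries.append(
            {"payload": payload, "prev_hash": prev_hash, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered record breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if _digest(entry["payload"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def decision_record(output: str, review_action: str) -> dict[str, Any]:
    """Build one record; every value here is illustrative."""
    return {
        "inputs": {"query": "example question"},
        "model_version": "checkpoint-Z",
        "prompt_template_version": "P",
        "retrieved_context": ["doc-D", "doc-E", "doc-F"],
        "knowledge_base_snapshot": "Y",
        "active_guardrails": ["pii-filter"],
        "output": output,
        "human_review_action": review_action,
    }


ledger = AuditLedger()
ledger.append(decision_record("recommendation 1", "approved"))
ledger.append(decision_record("recommendation 2", "none"))
```

`ledger.verify()` returns `True` for the untouched chain and `False` once any stored payload is edited. The chain proves integrity, not completeness: it cannot show that a decision was never recorded, which is why the list above also requires capturing every input and version at decision time.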

Pattern 2 — Bounded Autonomy

Agents operate inside an architecturally enforced perimeter.

Most agentic AI demos give the agent the keys to the kingdom and trust the system prompt to behave responsibly. In regulated industries, this amounts to malpractice (a strong statement, apologies). Bounded autonomy means the agent has a hard-coded, externally enforced perimeter on its normal operation: which tools it can call, which datasets it can read, which actions it can take, which thresholds trigger mandatory human review, and what the maximum consequence (financial or clinical) of any single decision can be. The boundaries live in the architecture, not in the prompt.

A payment agent that could move ten million dollars but is architecturally limited to ten thousand without a second human approval is bounded autonomy. A payment agent that’s been told in its system prompt to be careful is a wish.
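A minimal sketch of what “in the architecture, not in the prompt” can look like: a check that runs outside the model, before any tool call is executed. The tool names, the `Perimeter` type, and the approver field are hypothetical; the ten-thousand threshold mirrors the example above.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


class PerimeterViolation(Exception):
    """Raised when a requested action falls outside the enforced perimeter."""


@dataclass(frozen=True)
class Perimeter:
    allowed_tools: frozenset[str]
    single_approval_limit: Decimal  # above this, a second human must approve


@dataclass(frozen=True)
class ToolCall:
    tool: str
    amount: Decimal
    second_approver: Optional[str] = None  # identity of the human approver, if any


def authorize(call: ToolCall, perimeter: Perimeter) -> None:
    """Enforce the perimeter in code; the agent's prompt is never consulted."""
    if call.tool not in perimeter.allowed_tools:
        raise PerimeterViolation(f"tool not permitted: {call.tool}")
    if call.amount > perimeter.single_approval_limit and call.second_approver is None:
        raise PerimeterViolation(
            f"amount {call.amount} exceeds {perimeter.single_approval_limit} "
            "and has no second approval"
        )


# Illustrative perimeter for a payment agent.
PERIMETER = Perimeter(
    allowed_tools=frozenset({"send_payment", "lookup_account"}),
    single_approval_limit=Decimal("10000"),
)
```

With this in place, `authorize(ToolCall("send_payment", Decimal("50000")), PERIMETER)` raises, while the same call with `second_approver="ops-lead"` passes. The point of the design is that the agent cannot talk its way past the check, because the check never reads model output other than the structured call itself.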

Pattern 3 — Human Review Quality

Review is a designed intervention, not a checkbox.

“Human-in-the-loop” has become the most abused phrase in regulated AI. It often means a tired clinician clicks “approve” on 200 AI recommendations a day without reading them, or an ops (maker/checker) analyst rubber-stamps fraud flags faster than the model produces them. That is not human-in-the-loop. That is human-as-rubber-stamp, and it is worse than no review because it manufactures a paper trail of false attention.

Human review done right specifies which decisions need review, what information the reviewer needs to make the decision well, how much time they need, what training they need to interpret the AI output, and how the system measures whether reviews are happening with cognitive engagement or on autopilot. If you don’t measure the quality of the review, you don’t have a control, only a liability shield.
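One way to start measuring review quality is to look at time spent and agreement rate per reviewer. The sketch below is a crude first signal under stated assumptions: the thresholds (20 seconds, 98% agreement) are hypothetical placeholders, and a fast, agreeable reviewer may simply be handling easy cases, so treat a flag as a prompt for investigation rather than a verdict.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Review:
    reviewer: str
    seconds_spent: float
    agreed_with_model: bool


def review_quality(
    reviews: list[Review],
    min_median_seconds: float = 20.0,   # hypothetical threshold
    max_agreement_rate: float = 0.98,   # hypothetical threshold
) -> dict[str, dict[str, object]]:
    """Summarise each reviewer and flag likely rubber-stamping.

    A reviewer is flagged only when they are both very fast and almost
    always agree with the model.
    """
    by_reviewer: dict[str, list[Review]] = {}
    for review in reviews:
        by_reviewer.setdefault(review.reviewer, []).append(review)

    report: dict[str, dict[str, object]] = {}
    for reviewer, items in by_reviewer.items():
        median_seconds = median([r.seconds_spent for r in items])
        agreement_rate = sum(r.agreed_with_model for r in items) / len(items)
        report[reviewer] = {
            "reviews": len(items),
            "median_seconds": median_seconds,
            "agreement_rate": agreement_rate,
            "possible_autopilot": (
                median_seconds < min_median_seconds
                and agreement_rate > max_agreement_rate
            ),
        }
    return report


# Illustrative data: one reviewer approving everything in three seconds,
# one taking longer and overriding the model one time in ten.
SAMPLE = [Review("reviewer_a", 3.0, True) for _ in range(200)] + [
    Review("reviewer_b", 45.0, i % 10 != 0) for i in range(50)
]
REPORT = review_quality(SAMPLE)
```

On this sample, `reviewer_a` is flagged and `reviewer_b` is not. Stronger designs go further, for example by seeding known-wrong recommendations into the queue and checking whether reviewers catch them.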

Pattern 4 — Evidence-Grade Evaluation

Evals built to clinical-trial standards, not sprint-demo standards.

The eval suite that gets your model into a board deck is not the eval suite that gets it past a regulator. Evidence-grade evaluation is structured the way clinical trials are structured: pre-registered protocols, defined endpoints, statistical power calculations, sub-group analysis (does it perform equally well across demographics, geographies, and edge cases?), failure mode classification, and a clear separation between development data and validation data with a documented chain of custody.

If your evaluation can be summarised as “we ran 500 test cases and got a 94% pass rate,” you do not have evidence. You have a number with no protocol, no uncertainty estimate, and no sub-group breakdown behind it.
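To see why a bare pass rate is not enough, it helps to put an interval around it and split it by sub-group. The sketch below uses the Wilson score interval, a standard choice for binomial proportions. The overall figure (470 of 500) matches the 94% example; the sub-group split and the 0.90 endpoint are invented purely for illustration.

```python
import math


def wilson_interval(passes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a pass rate (z = 1.96 gives roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = passes / total
    z2 = z * z
    denom = 1 + z2 / total
    centre = (p + z2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z2 / (4 * total * total)) / denom
    return centre - half, centre + half


def evaluate(
    results: dict[str, tuple[int, int]], required_lower_bound: float
) -> dict[str, dict[str, object]]:
    """Check each group against a pre-registered endpoint on the lower bound."""
    rows: dict[str, dict[str, object]] = {}
    for name, (passes, total) in results.items():
        low, high = wilson_interval(passes, total)
        rows[name] = {
            "pass_rate": passes / total,
            "ci_low": low,
            "ci_high": high,
            "meets_endpoint": low >= required_lower_bound,
        }
    return rows


# Hypothetical split of the same 500 cases into two sub-groups.
RESULTS = {
    "overall": (470, 500),
    "subgroup_a": (452, 476),
    "subgroup_b": (18, 24),
}
REPORT = evaluate(RESULTS, required_lower_bound=0.90)
```

In this made-up split, the overall result clears the endpoint while `subgroup_b` does not: 24 cases are too few to support any strong claim, and the observed rate is lower as well. That is the kind of finding a headline pass rate hides and a pre-registered sub-group analysis surfaces.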

Pattern 5 — Data & Model Lineage

Every output traceable to every artefact that shaped it.

When a regulator asks, “What data trained this model?” the right answer is not “publicly available text from the internet.” The right answer is a documented chain: training data sources with licensing information, fine-tuning datasets with version hashes, retrieval index snapshots with timestamps, prompt templates with version control, and guardrail configurations with effective dates. For every output the system produces, you should be able to walk backwards to every artefact that contributed to it.
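The “walk backwards” requirement can be sketched as a content-addressed manifest that every output points to. This is an illustrative data structure, not a reference to any particular lineage tool; the class, field names, and sample values are all assumptions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class LineageManifest:
    """The set of artefacts that were live when an output was produced."""

    training_data_sources: tuple[str, ...]
    fine_tuning_dataset_hash: str
    retrieval_index_snapshot: str
    prompt_template_version: str
    guardrail_config_version: str

    def manifest_id(self) -> str:
        """Content hash: any change to any artefact yields a new id."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class LineageRegistry:
    """Maps output ids to the manifest that was active when they were produced."""

    def __init__(self) -> None:
        self._manifests: dict[str, LineageManifest] = {}
        self._outputs: dict[str, str] = {}

    def record_output(self, output_id: str, manifest: LineageManifest) -> None:
        manifest_id = manifest.manifest_id()
        self._manifests[manifest_id] = manifest
        self._outputs[output_id] = manifest_id

    def trace(self, output_id: str) -> LineageManifest:
        """Walk backwards from an output to every artefact behind it."""
        return self._manifests[self._outputs[output_id]]


# Illustrative values only.
MANIFEST_V1 = LineageManifest(
    training_data_sources=("licensed-corpus-2023",),
    fine_tuning_dataset_hash="sha256:placeholder",
    retrieval_index_snapshot="2024-05-01T00:00:00Z",
    prompt_template_version="prompt-v7",
    guardrail_config_version="guardrails-v3",
)
MANIFEST_V2 = replace(MANIFEST_V1, prompt_template_version="prompt-v8")

registry = LineageRegistry()
registry.record_output("output-001", MANIFEST_V1)
registry.record_output("output-002", MANIFEST_V2)
```

`registry.trace("output-001")` returns the first manifest even after the prompt template has moved on, which is the property a regulator’s question depends on. The manifest is only as trustworthy as the hashes and versions fed into it, so it needs to be populated by the deployment pipeline rather than by hand.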

This is also where vendor risk lives. If your foundation model vendor cannot tell you what their training data was, you have inherited their problem. In a regulated context, that may be unacceptable. This is why regulated industries are looking at smaller, sovereign, auditable models, even at a capability cost.

Pattern 6 — Failure Containment

Designed for graceful failure, not heroic prevention.

Bounded Autonomy is about the perimeter within which the system operates when things are normal. Failure Containment is about what happens when things are not normal: when the model is wrong, the inputs are adversarial, the data drifts, or the guardrails are bypassed. The two patterns are two sides of the same coin.

Containment means the system has a defined behaviour when uncertainty exceeds a threshold (refuse, escalate, defer), hard limits on consequential actions (rate limits, value limits, irreversibility limits), detection mechanisms for known failure modes (drift, bias, hallucination, prompt injection), and rollback procedures that work fast — measured in minutes, not change-management cycles.

In MedTech, this is the FMEA mindset. In banking, it is the circuit breaker mindset. In both cases, the assumption is that the system will fail, and the engineering goal is to ensure that failures are detected, contained, and reversible before they become harmful.
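A minimal sketch of the containment behaviours described above: defer when confidence is low, escalate when the value at stake is high, and stop acting altogether once too many failures have been detected. The thresholds, the `ContainmentGate` name, and the notion of a single confidence score are simplifying assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"  # hand the decision to a human
    REFUSE = "refuse"      # breaker is open; take no automated action


@dataclass
class ContainmentGate:
    min_confidence: float = 0.90   # hypothetical uncertainty threshold
    max_value: float = 10_000.0    # hypothetical limit on consequential actions
    failure_threshold: int = 3     # detected failures before the breaker opens
    recent_failures: int = 0

    def decide(self, confidence: float, value: float) -> Action:
        """Apply the breaker first, then value and confidence limits."""
        if self.recent_failures >= self.failure_threshold:
            return Action.REFUSE
        if value > self.max_value:
            return Action.ESCALATE
        if confidence < self.min_confidence:
            return Action.ESCALATE
        return Action.PROCEED

    def record_failure(self) -> None:
        """Called by detection mechanisms (drift, injection, bad outcome)."""
        self.recent_failures += 1

    def reset(self) -> None:
        """Close the breaker again, ideally only after human sign-off."""
        self.recent_failures = 0


gate = ContainmentGate()
routine = gate.decide(confidence=0.97, value=500.0)
uncertain = gate.decide(confidence=0.60, value=500.0)
```

In this sketch `routine` proceeds and `uncertain` is escalated; after three calls to `record_failure()` every request is refused until someone resets the gate. A production breaker would normally count failures within a time window and persist its state, both of which are left out here for brevity.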

Why Now?

Two years ago, the AI conversation in regulated industries was theoretical. Healthcare was watching. Banking was piloting. Insurance was modelling.

That has changed. The FDA now maintains a public list of authorised AI/ML-enabled medical devices that has grown into the many hundreds and continues to expand. Agentic payment and operations workflows are moving from controlled pilots toward supervised deployment in regulated banks. AI-assisted underwriting is being approved by insurance regulators, with conditions. The demos are becoming products. The products are becoming infrastructure. The infrastructure is now being audited.

And the playbook for how to do this safely, at scale, with evidence — that playbook is mostly being written behind NDAs, inside large enterprises, by teams who don’t have time to talk about it. The publicly available AI commentary continues to be dominated by use cases where the cost of being wrong is a refund, not a recall.

This series is an attempt to fill some of that gap. Not exhaustively (no series can), but with enough specificity to act on. The bridge between AI demos and AI infrastructure runs through these six patterns. The teams that build the bridge will earn the right to ship AI into the systems that matter. The patterns are how you build the bridge.

The rest of this series will go deep on the six patterns — and close with the retrofit problem most enterprises eventually face:

- Post 2 — The Audit Trail That Holds Up in Court. What to capture, how to anchor it, what tooling actually works, and the eat-this-not-that of audit architecture.

- Post 3 — Bounded Autonomy: Building the Cage Before You Build the Agent. Architectural patterns for blast-radius control, with worked examples from payment workflow design.

- Post 4 — Human Review, Without the Theatre. How to design review steps that survive a deposition.

- Post 5 — Evals That Pass Regulators, Not Just Demos. Borrowing from clinical trial methodology to build evidence-grade evaluation pipelines.

- Post 6 — Lineage as a First-Class Citizen. Tracking every artefact that shaped an AI output, from training data to the prompt version.

- Post 7 — Designing for Failure Before You Design for Success. FMEA-thinking for AI systems, with a containment pattern catalogue.

- Post 8 — When You Inherit the Problem. The retrofit playbook for AI systems already in production — vendor lock-in, missing lineage, contractual indemnities, and what to do when the business won’t let you turn it off.

Each post will be opinionated (sorry), specific, and prescriptive. Less “it depends,” more decision patterns, trade-offs, and concrete defaults. Vendor-agnostic by default. Just the patterns that have worked — and the ones that have failed — in the kinds of environments where being wrong has lawyers attached.